ContextBot

ContextBot stops the context rot

Your CLAUDE.md was accurate once. Hundreds of PRs later, it's full of stale instructions and your agent is a confused slop-machine. ContextBot fixes that.

Free during public beta. Install the GitHub App, and you'll have your first context-improvement PR ready for review in minutes.

How it works

Weekly PR with context improvements

No new workflow to adopt. Install once, then review AI coding context changes like any other PR.

Install in two clicks

ContextBot is a GitHub App. Install it in a few seconds, choose the repositories you want to improve, and you're ready to go.

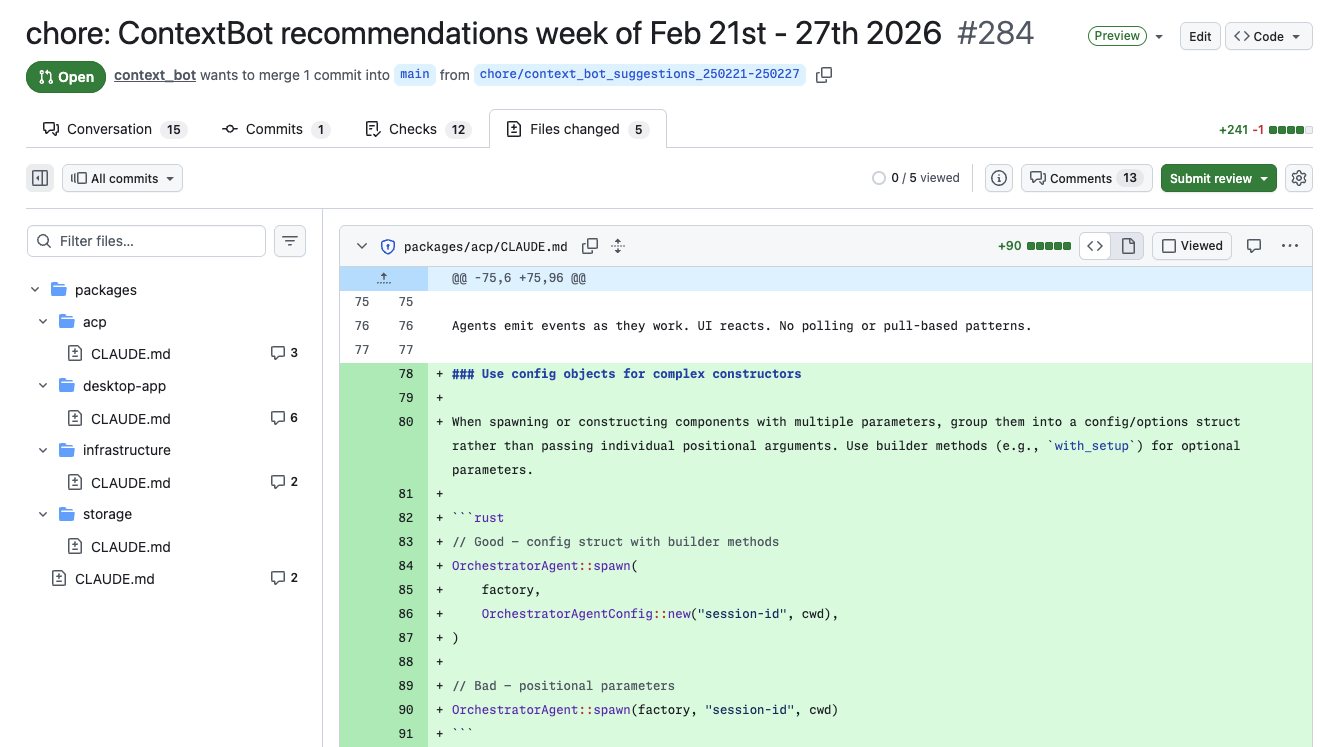

Get a weekly PR

ContextBot examines your pull requests and commits, extracts the conventions your team cares about, and opens a PR with recommended context updates.
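To make the extraction step concrete, here is a minimal sketch of one way recurring review feedback could be surfaced as candidate conventions. It is an illustration under stated assumptions, not ContextBot's actual pipeline: the `ReviewComment` type, the `candidate_conventions` function, and the "appears in at least two PRs" threshold are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class ReviewComment:
    """Hypothetical record of one review comment on a pull request."""
    pr_number: int
    body: str


def normalize(text: str) -> str:
    """Lowercase, collapse whitespace, and drop trailing punctuation."""
    return " ".join(text.lower().split()).rstrip(".!")


def candidate_conventions(comments: list[ReviewComment], min_prs: int = 2) -> list[str]:
    """Return comment texts that recur across at least `min_prs` distinct PRs."""
    seen: set[tuple[int, str]] = set()
    counts: Counter = Counter()
    for comment in comments:
        key = (comment.pr_number, normalize(comment.body))
        if key not in seen:  # count each PR at most once per comment text
            seen.add(key)
            counts[key[1]] += 1
    return [text for text, n in counts.most_common() if n >= min_prs]


comments = [
    ReviewComment(1, "Use pnpm, not npm."),
    ReviewComment(2, "use pnpm,  not npm"),
    ReviewComment(2, "use pnpm, not npm"),
    ReviewComment(3, "Nit: typo"),
]
result = candidate_conventions(comments)
```

In this toy input, the same correction shows up on two different PRs, so it is the only candidate returned. A real system would also need to read signal from the diffs themselves, which exact-match counting cannot do.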

Review like normal

Merge, comment, or close. ContextBot learns from your feedback. You're in control of every change to your context files, just like any other PR.

Why ContextBot

Automatically improves coding context

Automated Context Curation

Extracts the conventions your team actually cares about from pull requests and commits, including implicit patterns from the code you merge, not just PR comments.

Stop Repeating Yourself

Stop correcting your coding agents again...and again...and again. ContextBot bakes your team's conventions into context files so corrections stick.

A Better "Next Session" Automatically

Low comment volume on a PR doesn't mean there's nothing to learn. The diff itself carries signal about how your team writes code. That's where the implicit conventions live.

Human-in-the-Loop

ContextBot opens PRs you can review, edit, or merge, just like any other code change. You stay in control.

See it in action

Real diffs, not vague suggestions

Every change is a reviewable PR with a clear rationale. Merge what helps, skip what doesn't. ContextBot extracts high-signal conventions from your pull requests and commits, ranks the resulting insights, and avoids opening duplicate PRs.
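As a rough illustration of ranking insights and skipping ones that are already documented, consider the sketch below. The `Insight` type, its `occurrences` score, and the exact-match duplicate check are assumptions made for this example. They are not a description of how ContextBot scores or deduplicates.

```python
from dataclasses import dataclass


@dataclass
class Insight:
    """Hypothetical extracted convention with a simple support score."""
    text: str
    occurrences: int  # assumed score: number of PRs or commits backing the insight


def select_updates(insights: list[Insight], context_file: str, limit: int = 5) -> list[str]:
    """Rank insights by support and skip any already present in the context file."""
    # Treat each non-empty line of the context file as an existing rule,
    # ignoring list markers and case.
    existing = {
        line.strip("-* \t").lower()
        for line in context_file.splitlines()
        if line.strip()
    }
    chosen: list[str] = []
    for insight in sorted(insights, key=lambda i: i.occurrences, reverse=True):
        key = insight.text.strip().lower()
        if key in existing:
            continue  # already documented, or already chosen in this pass
        existing.add(key)
        chosen.append(insight.text)
        if len(chosen) == limit:
            break
    return chosen


context = "# Conventions\n- Use pnpm, not npm\n"
insights = [
    Insight("Prefer named exports", 3),
    Insight("Use pnpm, not npm", 7),
    Insight("prefer named exports", 1),
    Insight("Wrap DB calls in a transaction", 5),
]
updates = select_updates(insights, context)
```

Here the highest-scoring insight is dropped because the context file already states it, and the lower-scoring near-duplicate is dropped because a higher-ranked copy was already selected.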

Supported Agents

Works with the tools your team already uses

Launching with

- CLAUDE.md

- AGENTS.md

Fast follows

- .cursor/rules

- .github/copilot-instructions.md

- GEMINI.md

Don't see your agent? Let us know what you use.

Pricing

Free for up to 5 repos during beta

Starter

Free

Up to 5 repos during beta, then 1 repo

- Automated context update PRs

- Context improvements for up to 5 repositories during beta

- Standard GitHub review and merge workflow

Best for trying ContextBot on up to 5 repos during beta.

Team

Free (Beta)

Higher frequency and advanced workflows

- Higher PR frequency

- Pull-request signal learning and code drift analysis

- Documentation indexing and deeper optimization

Built for engineering teams scaling AI coding across repos.

| Capability | Starter | Team |
| --- | --- | --- |
| Repos | | |
| Context PR cadence | | |
| Learn from pull requests | | |
| Pull-request signal learning | | |
| Code drift analysis | | |
| Documentation indexing | | |

Reach out if you need higher update frequency and deeper optimization.

Security & Privacy

Built for teams that care about security

Private LLM processing

Requests are processed through Amazon Bedrock with enterprise privacy controls. Prompts and completions are not used to train foundation models and are not shared with model providers for training.

Least-privilege + org isolation

ContextBot uses minimal GitHub permissions: read access to code and pull requests, and write access used only to open PRs. Processing runs in isolated, org-scoped environments.

Ephemeral analysis

Code is analyzed only long enough to generate context updates and PRs. Repository code is not persisted beyond the active processing window.
